Bogie system faults that signal bigger fleet problems

Dr. Alistair Thorne

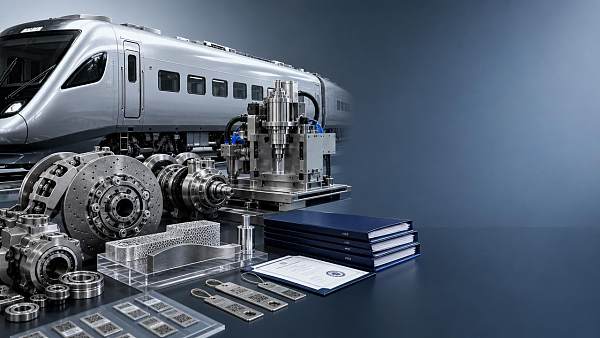

Recurring bogie system faults often point to deeper risks across a fleet and the network it runs on, from track maintenance gaps to signaling system integration and rail regulatory compliance. For EPC contractors, procurement directors, and technical evaluators, early diagnosis supports predictive maintenance, rail transit efficiency, and carbon-neutral rail goals, and it fits the lifecycle and quality-management approach set out in EN 50126, IEC 62278, and ISO/TS 22163.

Why recurring bogie faults should never be treated as isolated defects

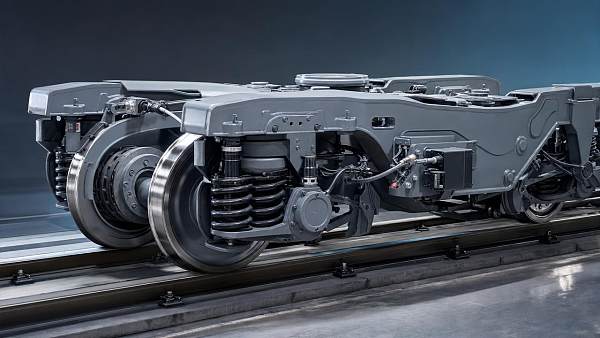

A bogie fault is rarely just a workshop issue. In rail operations, repeated wheelset vibration, abnormal bearing temperature, hunting instability, suspension fatigue, or brake interface wear often signals a wider fleet reliability problem. When the same symptom appears across 3–5 trainsets, or returns within one maintenance cycle of 30–90 days, decision-makers should look beyond component replacement and assess system interaction across track, vehicle dynamics, maintenance execution, and software-driven diagnostics.
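The two triggers in this paragraph, spread across several trainsets and return within one maintenance cycle, can be computed directly from a fault log. The sketch below is a minimal illustration, assuming a hypothetical `FaultEvent` record with a trainset ID, a symptom code, and a date; the 3-unit and 90-day defaults come from the ranges above and should be tuned to the fleet's own maintenance cycle.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class FaultEvent:
    unit_id: str      # trainset identifier
    symptom: str      # symptom code, e.g. "yaw_damper_leak" (hypothetical label)
    event_date: date


def recurrence_summary(events, symptom, cycle_days=90):
    """Return (units_affected, units_recurring) for one symptom.

    A unit counts as recurring if the symptom came back within cycle_days
    of its previous occurrence on that same unit.
    """
    dates_by_unit = defaultdict(list)
    for ev in events:
        if ev.symptom == symptom:
            dates_by_unit[ev.unit_id].append(ev.event_date)
    recurring = set()
    for unit, dates in dates_by_unit.items():
        dates.sort()
        gaps = [(later - earlier).days for earlier, later in zip(dates, dates[1:])]
        if any(g <= cycle_days for g in gaps):
            recurring.add(unit)
    return set(dates_by_unit), recurring


def beyond_component_replacement(events, symptom, min_units=3, cycle_days=90):
    """True if the symptom spans min_units trainsets or recurs within one cycle."""
    units, recurring = recurrence_summary(events, symptom, cycle_days)
    return len(units) >= min_units or bool(recurring)
```

Setting `cycle_days` to the lower end of the range (30) makes the recurrence test stricter, which suits fleets with short inspection intervals.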

For information researchers and technical evaluators, the key question is not only what failed, but what the fault pattern reveals. A bogie may show uneven wear because axle load distribution drifted outside acceptable maintenance tolerance. It may also be reacting to poor track geometry, wheel reprofiling inconsistency, lubrication gaps, or interface mismatch between new and legacy rolling stock. These conditions increase lifecycle cost long before they cause a visible service failure.

For commercial evaluators, recurring bogie system faults influence more than spare parts spending. They affect availability guarantees, tender risk, depot labor planning, and warranty discussions between operator, EPC contractor, and supplier. In large rail programs, a 2–4 week delay in root-cause confirmation can push back fleet acceptance, extend temporary maintenance measures, and complicate compliance evidence for cross-border or regulator-led reviews.

This is where G-RTI adds value. By benchmarking mechanical, digital, and structural integrity across rolling stock and rail infrastructure, G-RTI helps procurement directors and engineering teams separate isolated parts failure from systemic fleet risk. That distinction matters when the decision is whether to replace a component batch, revise maintenance intervals, audit track conditions, or reassess supplier qualification against international rail standards.

- A single-event bogie fault may be maintenance-related; repeated faults across multiple units usually indicate system-level interaction.

- The most important early warning signs often emerge within 2–3 inspection cycles rather than at final failure.

- Procurement teams should link bogie fault data with track, signaling, depot process, and supplier performance records.

What usually sits behind the symptom

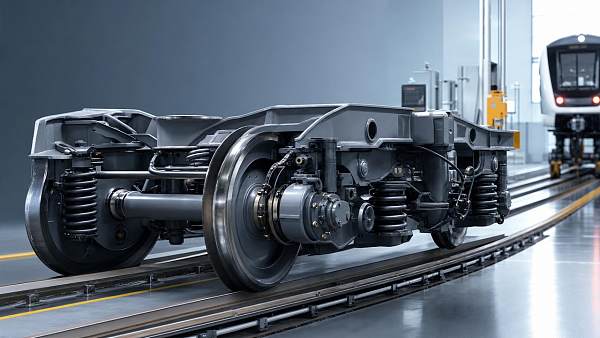

Bogie systems sit at the intersection of wheel-rail contact, structural loading, braking, ride quality, and onboard monitoring. Because of that, they often become the first subsystem to expose deeper weaknesses elsewhere. A cracked primary suspension element may indicate repetitive dynamic overload. Frequent yaw damper replacement may point to route conditions the original duty cycle did not fully consider. Abnormal flange wear may show a mismatch between wheel maintenance and track curve behavior rather than poor bogie design alone.

In high-speed rail, urban metro, and mixed-traffic corridors, these interactions become more complex. Vehicles moving between depot conditions, tunnel sections, elevated structures, and variable track quality may produce fault signatures that look mechanical but are driven by infrastructure or operational change. That is why fault interpretation should combine vehicle data, route data, and maintenance records covering 6–12 months of operation where possible.

Which bogie fault patterns often indicate bigger fleet problems?

Not every bogie issue deserves the same escalation path. Some defects remain local to a component batch, while others point to systemic risk affecting a route, an asset class, or a maintenance regime. For technical and business assessment teams, identifying the pattern early helps prioritize whether the next step should be a depot correction, a network-wide audit, or a supplier review.

The table below summarizes common bogie systems faults and what they may indicate beyond the immediate repair action. These are not universal conclusions, but they provide a practical screening tool during fleet benchmarking, tender due diligence, and maintenance strategy review.

The operational meaning of these patterns is clear: if the same bogie fault recurs under similar duty conditions, the right response is structured diagnosis, not serial replacement. A fleet that repeatedly consumes the same spare part may actually be showing a route, process, or compliance management issue. That is why root-cause review should include at least 4 data layers: maintenance history, route conditions, component genealogy, and onboard event records.
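One way to enforce the four-layer rule is to refuse to open structured diagnosis until every layer holds data. The following is a sketch only; the layer keys and the dictionary-based case record are hypothetical and do not represent a G-RTI or industry data model.

```python
# The four evidence layers named above; the key names are hypothetical labels.
REQUIRED_LAYERS = (
    "maintenance_history",   # work orders, inspection findings, intervals
    "route_conditions",      # curvature, track geometry, recent track works
    "component_genealogy",   # supplier, batch, installation date, prior repairs
    "onboard_events",        # condition-monitoring alarms and event logs
)


def missing_layers(case_evidence: dict) -> list:
    """Return the required layers that are absent or empty for one root-cause case."""
    return [layer for layer in REQUIRED_LAYERS if not case_evidence.get(layer)]


def ready_for_root_cause(case_evidence: dict) -> bool:
    """A case is ready for structured diagnosis only when all four layers hold data."""
    return not missing_layers(case_evidence)
```

Listing the missing layers, rather than returning a bare yes or no, tells the team which department still owes evidence.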

Three fault clusters that deserve immediate escalation

First, thermal anomalies must be escalated quickly. Bearing temperature excursions, repeated sensor alarms, or unexplained hotspots can move from nuisance events to safety risk in a short period. Second, instability-related symptoms such as abnormal vibration, ride deterioration, or yaw behavior require cross-functional review because they often involve both vehicle and track. Third, fatigue-related findings, including cracks in frames, brackets, or suspension mounts, demand strict traceability and batch assessment.
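For the thermal cluster, a common screening idea is to compare each bearing against both an absolute temperature rise over ambient and its sister bearings on the same bogie, since a single hot axlebox stands out relative to its neighbors. The sketch below illustrates that idea under stated assumptions: the 45 °C rise limit and 15 °C hotspot margin are placeholders, not values from any standard or supplier specification, and real alarm limits must come from the vehicle and bearing documentation.

```python
from statistics import median


def thermal_flags(readings, ambient_c, abs_rise_limit=45.0, rel_margin=15.0):
    """Flag bearings on one bogie at one timestamp.

    readings: bearing position -> temperature in deg C.
    'absolute_rise' if a bearing runs more than abs_rise_limit above ambient;
    'hotspot_vs_sisters' if it runs more than rel_margin above the median of
    the other bearings. Both limits are hypothetical placeholders.
    """
    flags = {}
    for pos, temp in readings.items():
        sisters = [t for p, t in readings.items() if p != pos]
        reasons = []
        if temp - ambient_c > abs_rise_limit:
            reasons.append("absolute_rise")
        if sisters and temp - median(sisters) > rel_margin:
            reasons.append("hotspot_vs_sisters")
        if reasons:
            flags[pos] = reasons
    return flags


print(thermal_flags({"A1L": 48.0, "A1R": 50.0, "A2L": 49.0, "A2R": 70.0}, ambient_c=20.0))
# {'A2R': ['absolute_rise', 'hotspot_vs_sisters']}
```

The relative check matters because a bearing can stay under an absolute limit on a cold day while still running far hotter than its sisters.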

For distributors and agents, these clusters also influence inventory planning. A local stock increase may solve the short-term service need, but if the fleet issue is systemic, buyers will soon shift from spare part orders to engineering investigation, redesign support, or alternative sourcing. Understanding that transition is commercially important.

A practical screening checklist

- Check whether the same fault appears on more than one route, depot, or trainset family within 1–2 quarters.

- Compare component replacement timing against expected maintenance intervals rather than against emergency stock availability.

- Review whether the event aligns with track works, software upgrades, wheel reprofiling changes, or supplier batch transitions.

- Escalate early if evidence touches safety margins, reliability guarantees, or regulator-facing documentation.
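The spread and alignment checks in the first and third items lend themselves to a simple script run against the fault and change logs. This minimal sketch assumes a hypothetical dictionary schema for both logs, treats roughly two quarters (180 days) as the look-back window, and counts a change as aligned if it preceded the first recent fault by up to 30 days; both windows are illustrative choices.

```python
from datetime import date, timedelta

SCREEN_KEYS = ("route", "depot", "family")  # contexts the checklist compares


def screen_fault(fault_events, change_events, window_days=180, align_days=30):
    """Screen one recurring symptom against the checklist.

    fault_events: dicts with 'date', 'route', 'depot', 'family' (hypothetical schema).
    change_events: dicts with 'date' and 'kind', e.g. 'track_works',
        'software_upgrade', 'wheel_reprofiling', 'batch_transition'.
    """
    if not fault_events:
        return {"multi_context_spread": False, "aligned_changes": []}
    latest = max(e["date"] for e in fault_events)
    recent = [e for e in fault_events if latest - e["date"] <= timedelta(days=window_days)]
    # Spread: the symptom appears on more than one route, depot, or trainset family.
    spread = any(len({e[k] for e in recent}) > 1 for k in SCREEN_KEYS)
    first = min(e["date"] for e in recent)
    # Alignment: a change that preceded the first recent fault by up to align_days.
    aligned = sorted({
        c["kind"] for c in change_events
        if timedelta(0) <= first - c["date"] <= timedelta(days=align_days)
    })
    return {"multi_context_spread": spread, "aligned_changes": aligned}


if __name__ == "__main__":
    faults = [
        {"date": date(2024, 4, 10), "route": "R1", "depot": "D1", "family": "F1"},
        {"date": date(2024, 5, 2), "route": "R2", "depot": "D1", "family": "F1"},
    ]
    changes = [
        {"date": date(2024, 3, 25), "kind": "wheel_reprofiling"},
        {"date": date(2023, 12, 1), "kind": "software_upgrade"},
    ]
    print(screen_fault(faults, changes))
```

The safety-margin item in the checklist is left to engineering judgment here; a script can flag patterns, but escalation on safety grounds should not wait for a count.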

How to distinguish a bogie component defect from a system integration problem

This distinction is central to both technical troubleshooting and procurement judgment. A defective component usually shows a batch-related, material-related, or manufacturing-related signature. A system integration problem appears when bogie behavior changes according to route, speed profile, train control logic, braking coordination, or maintenance execution. The visible fault may be identical, but the remedy, liability, and budget impact are very different.
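A rough way to test which signature dominates is to ask whether faults concentrate more strongly by component batch or by route, after normalizing for exposure. The sketch below is a heuristic, not a statistical test: the schema, the exposure measure, and the 1.5 margin are assumptions, and an "inconclusive" result is a prompt for broader review rather than an answer.

```python
from collections import Counter


def concentration(faults, exposure, key):
    """Highest per-group fault rate divided by the fleet-wide rate.

    faults: list of dicts, one per fault, with 'batch' and 'route' keys (hypothetical schema).
    exposure: group value -> exposure (installed units or km run); every value must be > 0.
    key: the attribute to group by, 'batch' or 'route'.
    """
    counts = Counter(f[key] for f in faults)
    overall_rate = len(faults) / sum(exposure.values())
    return max(counts[g] / exposure[g] for g in exposure) / overall_rate


def lean(faults, batch_exposure, route_exposure, margin=1.5):
    """Heuristic: does grouping by batch or by route concentrate the faults more?"""
    if not faults:
        return "no faults"
    by_batch = concentration(faults, batch_exposure, "batch")
    by_route = concentration(faults, route_exposure, "route")
    if by_batch >= margin * by_route:
        return "component-defect signature"
    if by_route >= margin * by_batch:
        return "integration signature"
    return "inconclusive"
```

With small fault counts this ratio is noisy, so it is best used to decide where to look first, not to settle liability.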

For example, abnormal brake-related wheel damage may seem to point to wheelset quality. Yet in practice, the trigger may sit in braking software thresholds, adhesion conditions, or inconsistent calibration between onboard and wayside systems. Likewise, fast damper deterioration can originate from route dynamics, not just damper design. In such cases, choosing a replacement on unit price alone tends to create repeat failure costs later.

G-RTI’s benchmarking perspective is especially useful here because bogie performance cannot be judged in isolation. Mechanical hardware, digital monitoring, route conditions, and maintenance discipline all shape the outcome. For procurement directors managing international sourcing, this matters even more when one supplier manufactures in Asia while the fleet must satisfy European, American, or Middle Eastern regulatory expectations.

A structured comparison matrix helps teams avoid premature conclusions. It also clarifies whether the next commercial action should be warranty enforcement, route-side inspection, operational adjustment, or a broader technical audit.

The table shows why a low-cost replacement decision can become expensive if the diagnosis is wrong. In B2B rail procurement, the real cost driver is not only part price but also downtime, repeat inspection, depot resource diversion, and acceptance delay. A buyer who identifies the failure type correctly during the first 2–4 weeks can often avoid months of fragmented corrective action.

Where signaling and digital monitoring enter the picture

Bogie system faults are sometimes amplified by poor data coordination rather than by mechanics alone. Event logs, braking commands, traction response, and condition monitoring outputs can reveal whether the bogie is reacting to a control sequence rather than originating the fault. In CBTC- or ETCS-equipped environments, synchronized time-series analysis becomes especially valuable when vibration, wheel-slide, or thermal alarms coincide with operating-mode transitions.
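The core of synchronized time-series analysis is pairing each alarm with the nearest operating-mode transition on a common clock. This sketch assumes both event streams have already been converted to seconds on a synchronized time base, which is itself a non-trivial step in practice; the 5-second window is a placeholder.

```python
from bisect import bisect_left


def alarms_near_transitions(alarm_times, transition_times, window_s=5.0):
    """Pair each alarm with the nearest mode transition if it lies within window_s.

    Both inputs are timestamps in seconds on the same synchronized clock.
    Returns a list of (alarm_time, transition_time) tuples.
    """
    transitions = sorted(transition_times)
    pairs = []
    for a in sorted(alarm_times):
        i = bisect_left(transitions, a)
        # The nearest transition is either just before or just after the alarm.
        candidates = transitions[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda t: abs(t - a))
        if abs(nearest - a) <= window_s:
            pairs.append((a, nearest))
    return pairs


print(alarms_near_transitions([10.0, 52.0, 200.0], [12.0, 50.0, 100.0]))
# [(10.0, 12.0), (52.0, 50.0)]
```

If a large share of alarms pairs with transitions, the control sequence becomes a candidate cause alongside the bogie hardware.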

This is also one reason G-RTI covers not only rolling stock hardware but also signaling, track maintenance, and traction power supply. Fleet problems rarely stay inside one discipline. Decision-makers need an integrated view, especially when contracts, liability, and compliance review depend on it.

What procurement and technical teams should verify before faults scale across the fleet

When bogie system faults start recurring, procurement teams should avoid jumping straight to reordering. The smarter sequence is to verify 5 core dimensions: configuration fit, maintenance interval realism, standards alignment, traceability, and support responsiveness. This is where business assessment and engineering assessment must work together rather than in parallel silos.

For distributors, agents, and sourcing teams, supplier conversations should move beyond brochure claims. Ask how the component or subsystem performs under specific duty cycles, what inspection windows are expected, how batch traceability is managed, and which standards documentation is available for interface review. In practice, a difference of 10–15% in upfront price can be outweighed by depot interventions, route restrictions, or documentation gaps during acceptance.
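The trade-off can be made concrete with simple arithmetic. The figures below are invented for illustration: a unit price 13% lower is assumed to bring three times as many depot interventions. The model is deliberately crude (undiscounted, with no downtime, route-restriction, or documentation cost), so it likely understates the real gap.

```python
def lifecycle_cost(unit_price, units, interventions_per_year, cost_per_intervention, years):
    """Upfront purchase plus depot intervention cost over the horizon (undiscounted)."""
    return unit_price * units + interventions_per_year * cost_per_intervention * years


# Hypothetical figures: option A is 13% cheaper per unit but needs more depot visits.
option_a = lifecycle_cost(unit_price=8_700, units=40, interventions_per_year=24,
                          cost_per_intervention=3_000, years=5)
option_b = lifecycle_cost(unit_price=10_000, units=40, interventions_per_year=8,
                          cost_per_intervention=3_000, years=5)
print(option_a, option_b)  # 708000 520000
```

Here the 52,000 saved upfront is outweighed by 240,000 in additional intervention cost over five years.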

The procurement guide below is designed for rolling stock buyers, EPC contractors, and evaluators who need a practical decision framework when bogie-related faults affect supplier selection, replacement planning, or technical clarification rounds.

The priority is to treat bogie fault analysis as a sourcing and lifecycle issue, not only a maintenance issue. If a supplier cannot explain route-fit assumptions, traceability depth, and response workflow, procurement risk rises. For large fleet projects, this can affect not only cost but also contract certainty and downstream regulator engagement.

A 4-step response path for buyers and evaluators

- Collect evidence from at least 3 sources: maintenance logs, route conditions, and component traceability records.

- Separate immediate operational containment from long-term root-cause work.

- Validate supplier explanations against standards obligations and actual duty-cycle assumptions.

- Use benchmarking to compare whether the issue is project-specific, supplier-specific, or network-wide.

This process is particularly relevant where multiple jurisdictions or tender frameworks apply. A fault that appears manageable in one market may become a major compliance concern in another if documentation, validation, and RAMS evidence are incomplete.

How standards, compliance, and predictive maintenance reduce fleet-level exposure

Standards do not eliminate bogie system faults, but they improve how faults are anticipated, documented, and escalated. EN 50126 and IEC 62278 are closely associated with lifecycle-oriented RAMS thinking, while ISO/TS 22163 supports process quality across the rail supply chain. For operators and EPC contractors, the practical value lies in disciplined evidence: requirements traceability, maintenance planning, supplier process control, and clearer change management.

In recurring bogie fault cases, predictive maintenance becomes most effective when it uses both condition data and engineering context. Temperature trends, vibration signatures, mileage intervals, and inspection findings should be interpreted together. A sensor alert every few weeks is useful, but a sensor alert correlated with curve-heavy service, a recent wheel reprofiling event, and a supplier batch change is far more actionable.
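A simple way to encode that idea is to raise an alert's priority for each piece of engineering context that accompanies it. The sketch below is an illustration only; the `AlertContext` fields, equal weights, and 30-day reprofiling window are hypothetical and would need calibration against the fleet's own failure history.

```python
from dataclasses import dataclass


@dataclass
class AlertContext:
    curve_heavy_service: bool      # unit runs mostly on tight-curve routes
    days_since_reprofiling: int    # days since the last wheel reprofiling
    batch_changed_recently: bool   # component comes from a newly introduced supplier batch


# Hypothetical equal weights; real weights would be fitted to fleet history.
WEIGHTS = {"curve": 1.0, "reprofiling": 1.0, "batch": 1.0}


def alert_priority(ctx: AlertContext, reprofiling_window_days: int = 30) -> float:
    score = 1.0  # base score for the sensor alert on its own
    if ctx.curve_heavy_service:
        score += WEIGHTS["curve"]
    if ctx.days_since_reprofiling <= reprofiling_window_days:
        score += WEIGHTS["reprofiling"]
    if ctx.batch_changed_recently:
        score += WEIGHTS["batch"]
    return score


# Rank open alerts so the most context-rich ones are reviewed first.
alerts = {
    "TS04-B2": AlertContext(True, 12, True),
    "TS09-B1": AlertContext(False, 140, False),
}
ranked = sorted(alerts, key=lambda k: alert_priority(alerts[k]), reverse=True)
print(ranked)  # ['TS04-B2', 'TS09-B1']
```

An additive score keeps the reasoning transparent to depot staff; a fitted model could replace it once enough labeled history exists.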

For rail organizations pursuing carbon-neutrality and higher fleet availability, this matters because reactive maintenance wastes labor, materials, and route capacity. Predictive maintenance can reduce unnecessary replacements only if the underlying asset hierarchy is understood. Otherwise, digital monitoring simply accelerates alarm generation without improving decisions.
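Understanding the asset hierarchy can start with something as simple as rolling alarms up a fleet / trainset / car / bogie / component path and finding the deepest node they share. This sketch assumes hypothetical slash-separated asset paths. A deep common ancestor suggests a local problem; a shallow one points either to a fleet-wide pattern or to unrelated faults, which a roll-up at a lower level helps separate.

```python
from collections import Counter


def rollup(alarm_paths, level):
    """Count alarms per ancestor at a given depth of the hierarchy (0 = fleet)."""
    return Counter("/".join(path.split("/")[: level + 1]) for path in alarm_paths)


def deepest_common_ancestor(alarm_paths):
    """Return the deepest hierarchy node shared by every alarm path."""
    split_paths = [p.split("/") for p in alarm_paths]
    common = []
    for parts in zip(*split_paths):
        if len(set(parts)) != 1:
            break
        common.append(parts[0])
    return "/".join(common)


alarms = [
    "F1/TS03/C2/B1/damper",
    "F1/TS03/C2/B1/bearing",
    "F1/TS03/C2/B2/damper",
]
print(deepest_common_ancestor(alarms))  # F1/TS03/C2
print(rollup(alarms, 3))
```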

G-RTI’s cross-pillar perspective supports this transition. Because the platform benchmarks rolling stock, track infrastructure, signaling, and traction systems together, users gain a stronger basis for deciding whether a bogie fault belongs in the depot, on the route, in supplier qualification, or in system integration review. That helps prevent fragmented actions across 3 separate departments that should be working from one evidence chain.

Common compliance mistakes that slow correction

- Treating repeated bogie alarms as maintenance noise without linking them to route condition or operational mode.

- Documenting the repair action but not the failure pattern over 6–12 months.

- Assuming supplier conformity paperwork alone proves route suitability and integration robustness.

- Separating engineering evidence from commercial evaluation, which delays claims and corrective decisions.

What a stronger monitoring framework looks like

A useful framework includes 4 recurring checkpoints: anomaly detection, technical correlation, route or system verification, and commercial response. That means teams review not only whether a bearing ran hot or a suspension degraded, but also whether the event appeared under repeatable conditions, whether maintenance action solved it, and whether the supplier or integrator needs to update technical assumptions. In most fleets, a monthly review cycle combined with a quarterly cross-functional review is more effective than waiting for a major incident.

Such a framework also improves communication with regulators, insurers, and project stakeholders. Instead of reporting individual failures, the organization can show a controlled process for identifying trends, managing risk, and validating corrective action.

FAQ: practical questions from rail buyers, evaluators, and channel partners

How many recurring bogie faults should trigger a fleet-level review?

There is no universal threshold, but a fleet-level review becomes reasonable when the same symptom appears across 3 or more units, across more than one depot, or repeats within 1–2 maintenance cycles after corrective action. Safety-related thermal events, structural cracking, or instability should be escalated earlier even if the count is lower.
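The rule of thumb in this answer can be written as a small decision function. This is a sketch, not a standard: the category labels are hypothetical, and it reads "escalated earlier" conservatively by escalating safety-related categories on the first occurrence.

```python
# Hypothetical labels for the safety-related categories named in the answer.
SAFETY_CATEGORIES = {"thermal", "structural_crack", "instability"}


def fleet_review_needed(symptom_category, units_affected, depots_affected,
                        cycles_since_fix=None, max_cycles=2):
    """Decide whether a symptom warrants fleet-level review.

    cycles_since_fix: maintenance cycles between corrective action and the
    symptom's return, or None if it has not recurred after a fix.
    """
    if symptom_category in SAFETY_CATEGORIES:
        return True  # escalate safety-related findings regardless of count
    if units_affected >= 3 or depots_affected > 1:
        return True
    return cycles_since_fix is not None and cycles_since_fix <= max_cycles
```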

Are bogie faults usually caused by the bogie itself?

Not always. Bogie system faults may originate in wheel-rail interaction, brake behavior, track geometry, maintenance quality, control logic, or configuration mismatch. The bogie often exposes the problem first because it sits at the interface between the vehicle and the infrastructure.

What should procurement teams ask suppliers when faults keep recurring?

Ask for configuration assumptions, route-fit boundaries, traceability records, engineering response times, and documentation aligned to project standards. Also ask whether similar duty-cycle issues have required maintenance interval changes or integration review in other projects. The goal is not only to secure a replacement part, but to confirm whether the solution will remain stable over the next 6–12 months.

How long does structured root-cause review usually take?

An initial containment assessment may take 48–72 hours for urgent cases. A meaningful technical review often takes 2–4 weeks if route, maintenance, and supplier data are available. A broader fleet-level and contractual assessment can take longer, especially where multiple suppliers, depots, or regulatory interfaces are involved.

Why work with G-RTI when bogie faults start affecting procurement, compliance, and fleet confidence

When recurring bogie system faults begin to signal bigger fleet problems, rail stakeholders need more than a fault log. They need benchmarking, regulatory context, cross-discipline analysis, and commercially useful interpretation. G-RTI is built for that role. Our platform connects rolling stock integrity with track maintenance, signaling, traction, and international market requirements so teams can move from symptom management to evidence-based decision-making.

For information researchers, we provide structured intelligence that clarifies whether a bogie issue is local, repeatable, or market-relevant. For technical evaluators, we support deeper review across mechanical performance, digital monitoring, and standards alignment. For business evaluators and channel partners, we help interpret how recurring faults affect sourcing risk, tender positioning, lifecycle cost, and supplier comparison in Europe, the Americas, the Middle East, and Asia-linked supply chains.

You can contact G-RTI to discuss parameter confirmation, bogie system selection logic, standards mapping, delivery-cycle expectations, maintenance strategy implications, and compliance documentation needs. We can also support comparative evaluation of suppliers, route-fit review, spare parts planning logic, and technical-commercial clarification for ongoing rail projects.

If your team is assessing recurring wheelset, suspension, bearing, or stability-related issues, start with a focused inquiry. Share the fault pattern, operating context, project standard requirements, and current supplier setup. From there, G-RTI can help frame the right next step: component review, integration assessment, maintenance optimization, or broader fleet benchmarking.
