Rail Technical Standards That Often Delay Fleet Upgrades

Dr. Alistair Thorne

Fleet upgrades rarely fail because operators lack a modernization roadmap. They fail because rail technical standards reveal hidden incompatibilities that are expensive to fix late: signaling interfaces that do not align with the network, fire safety documentation that does not match the target market, software change control that cannot pass assurance, or cybersecurity controls that were never designed for rolling stock certification. For technical evaluators, the practical question is not whether standards matter, but which ones most often create approval delays and how to identify those risks before procurement, retrofit, or testing begins.

Across international rail programs, the standards that slow fleet upgrades most often fall into a few predictable categories: interoperability, safety assurance, software and electronics validation, cybersecurity, fire protection, accessibility, environmental testing, braking performance, and maintainability evidence. The real bottleneck is rarely a single clause in a standard. It is usually the interaction between legacy vehicle architecture, national rules, supplier documentation quality, and the evidence required by assessors, infrastructure managers, and regulators.

For technical assessment teams, that means a better evaluation approach is more valuable than a longer checklist. The goal is to determine early whether a fleet upgrade is merely an engineering integration task or a full compliance re-qualification exercise. That distinction drives timeline, cost, testing scope, and supplier risk. In practice, the fastest-moving upgrade programs are those that map standards to design changes at the concept stage, not after prototype completion.

Why do rail technical standards delay fleet upgrades more than expected?

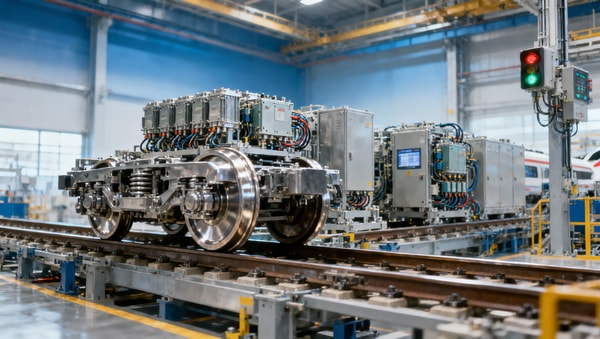

The biggest misconception in fleet modernization is that replacing or improving a subsystem automatically remains within the original certification envelope. In reality, many upgrade packages affect multiple compliance domains at once. A new traction converter may change electromagnetic compatibility behavior. A passenger information system refresh may introduce networked software that triggers cybersecurity review. A brake system modification may require renewed demonstration of stopping performance, wheel-slide interaction, and fail-safe behavior.

Technical evaluators often encounter delays because standards are applied as if they were independent workstreams. They are not. A change assessed under one standard can trigger new evidence requirements under several others. For example, introducing digital train control interfaces may touch EN 50126 lifecycle processes, EN 50128 software assurance, EN 50129 safety case expectations, and network-specific interoperability rules. If that chain is not recognized early, testing plans and documentation packages quickly become incomplete.
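The chain effect described above can be made explicit with a simple lookup from change type to the standards clusters it may touch. The following is a minimal Python sketch. The change-type keys and the `TRIGGERS` table are hypothetical names built from the examples in this article, not an authoritative applicability list.

```python
# Hypothetical mapping from change type to the standards clusters it may touch.
# Entries come from the examples in this article and are illustrative only;
# real applicability depends on the project, the network, and the authority.
TRIGGERS = {
    "digital_train_control_interface": [
        "EN 50126 (lifecycle processes)",
        "EN 50128 (software assurance)",
        "EN 50129 (safety case)",
        "network-specific interoperability rules",
    ],
    "interior_refurbishment": ["EN 45545 (fire performance)"],
    "train_to_ground_connectivity": ["IEC 62443-derived cybersecurity practices"],
}


def standards_touched(changes):
    """Return de-duplicated standards clusters for a list of changes, in first-seen order."""
    seen = []
    for change in changes:
        # Unknown change types contribute nothing rather than raising.
        for standard in TRIGGERS.get(change, []):
            if standard not in seen:
                seen.append(standard)
    return seen
```

Running the lookup over every item in an upgrade package gives a first view of how many evidence workstreams a nominally single change opens.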

Another source of delay is market transfer. A fleet or subsystem that performs well in one region may still face significant obstacles in Europe, North America, or the Middle East because local authorities, infrastructure managers, and project owners require additional proof beyond the manufacturer’s baseline compliance claim. This is exactly where technical benchmarking becomes critical: not just checking whether a product was built to a standard, but whether its evidence package is acceptable for the target corridor and operating context.

Which standards most commonly become bottlenecks during upgrades?

For most rolling stock and transit upgrade programs, the highest-risk standards clusters are well known. The first is the RAMS and lifecycle assurance family, especially EN 50126 and related safety validation frameworks. These standards do not simply ask whether a component works. They require traceable proof that reliability, availability, maintainability, and safety were managed through the full change process. Many delayed projects are not technically unsound; they are evidentially weak.

The second bottleneck area is software and programmable electronics, particularly EN 50128 and EN 50129 in applicable environments, along with project-specific software assurance requirements. Legacy fleets were often not designed with modern code traceability, configuration management, cybersecurity hardening, or formal change impact analysis in mind. When upgrades introduce new onboard software, gateways, remote diagnostics, or condition monitoring tools, compliance effort can expand far beyond the apparent size of the change.

Interoperability standards are another major source of delay, especially where fleets must integrate with ETCS, CBTC, platform systems, power supply constraints, or mixed-traffic infrastructure. A technically capable onboard solution can still be delayed if interface definitions are incomplete, national technical rules remain unresolved, or legacy architecture lacks the physical and logical separation required for safe integration. In these cases, the standard itself is only part of the problem; the interface management discipline is equally important.

Fire safety standards and material compliance requirements also routinely affect upgrade schedules. Interior refurbishments, cab redesigns, cable replacement, HVAC modification, battery enclosure changes, or underframe equipment additions can all trigger renewed assessment under applicable fire performance rules such as EN 45545 in many markets. The delay often comes from material traceability gaps, outdated supplier declarations, or insufficient test evidence for assemblies rather than individual materials.

Cybersecurity has become one of the fastest-growing causes of program friction. Practices derived from IEC 62443 and rail-specific cybersecurity frameworks increasingly shape acceptance expectations, even where contractual language is still evolving. Adding train-to-ground connectivity, remote monitoring, onboard Ethernet networks, or software update capability introduces a security assurance burden that older fleets were not designed to accommodate. Technical evaluators need to treat cybersecurity as an engineering acceptance issue, not a future IT enhancement.

Where do technical evaluators usually underestimate compliance risk?

The most common blind spot is assuming that equivalent performance means equivalent compliance. A replacement traction motor, brake controller, door system, or bogie component may meet or exceed the old system’s functional performance, but if it changes operating loads, failure modes, thermal characteristics, software behavior, or maintenance logic, the original approval basis may no longer hold. Standards-based review must focus on change impact, not just nominal specification equivalence.

A second blind spot is incomplete configuration baseline control. Fleet upgrades often involve mixed vehicle conditions across production batches, maintenance histories, and previous modifications. If the evaluator does not establish exactly which baseline is being upgraded, compliance evidence quickly becomes fragmented. This problem is especially serious in long-life fleets where documentation has passed through multiple owners, depots, or suppliers. Standards-related delays are frequently document-control delays in disguise.

Third, teams often underestimate the effort required to prove compatibility with infrastructure rather than just vehicle compliance in isolation. Pantograph changes, axle load impacts, EMC behavior, signaling antenna placement, wheel profile changes, braking curves, and radio interfaces all have infrastructure consequences. If infrastructure manager approval pathways are not built into the upgrade plan from the start, certification can stall even after successful vehicle-level tests.

A further risk lies in overreliance on supplier declarations without auditing the underlying evidence quality. A vendor may claim alignment with rail technical standards, but technical assessment teams need to verify the scope, edition, test conditions, exclusions, and certification body recognition behind that claim. Delays arise when it is discovered late that compliance was partial, based on outdated editions of a standard, or valid only in another operating environment.

How do signaling and communication standards create the longest delays?

Signaling-related upgrades are among the most delay-prone because they involve safety, interoperability, software, telecommunications, and infrastructure interface management simultaneously. Whether the project concerns ETCS retrofitting, CBTC migration, train detection compatibility, onboard radio replacement, or data gateway integration, the number of dependent approvals is high. A single unresolved interface can stop dynamic testing, and without dynamic testing the wider approval schedule collapses.

One recurring problem is timing mismatch between rolling stock readiness and wayside readiness. A fleet may be technically upgraded for a new signaling regime, but if trackside baselines, radio coverage plans, balise data, or operational scenarios are not stabilized, validation evidence remains incomplete. Technical evaluators should therefore assess not just onboard compliance, but program synchronization risk across the entire rail system.

Another issue is legacy architecture incompatibility. Older fleets may lack the space, power quality, cooling capacity, cable routing, antenna location, or EMC segregation needed for modern signaling equipment. Retrofitting around these constraints often triggers redesign loops. The standards themselves do not create the physical limitation, but they formalize performance and assurance thresholds that cannot be waived easily.

Documentation depth is also more demanding in signaling than in many mechanical upgrade domains. Hazard logs, interface specifications, software verification records, independent assessment evidence, and operational rule alignment all need to be coherent. If any part of that package is inconsistent, assessors may request repetition of analysis or testing. That is why signaling upgrades should be screened first during technical due diligence, even if they represent only one part of the fleet modernization scope.

Why do software, electronics, and cybersecurity standards now matter as much as mechanical ones?

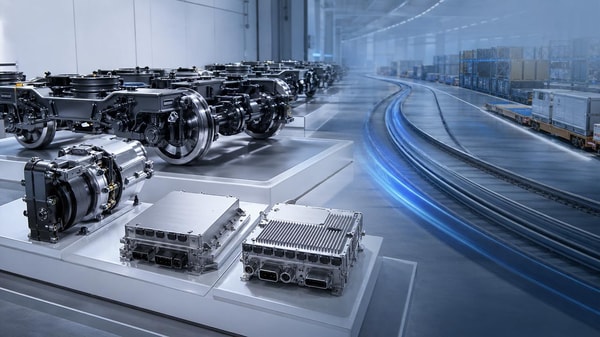

Historically, many fleet upgrade decisions were driven by visible hardware constraints: propulsion, doors, HVAC, braking, bogies, or structural fatigue. Those remain important, but modern rail programs increasingly fail schedule gates because of invisible system complexity. New software layers, vehicle networks, sensors, remote diagnostics, and digital maintenance tools create assurance obligations that are harder to close quickly than conventional hardware replacement.

Software-related standards matter because every functional change needs traceability from requirement to verification. If a supplier cannot demonstrate controlled development, version management, test coverage, and safety impact analysis, assessors may reject the evidence package regardless of how well the feature performs in demonstration. For technical evaluators, the quality of the software process is now part of the product itself.

Cybersecurity has a similar effect. Once a fleet is connected for monitoring, updates, passenger systems, or traffic management integration, the technical question becomes broader than uptime. Evaluators must ask whether the architecture supports segmentation, authentication, patch governance, incident response, and secure maintenance access. These are no longer purely operational concerns; they influence acceptance, insurability, and lifecycle risk.

This shift is especially relevant for cross-border procurement and upgrade programs where suppliers come from different engineering cultures and documentation practices. A technically advanced subsystem from a capable manufacturer may still face delay if its cybersecurity and software evidence does not align with the target authority’s review expectations. Benchmarking documentation maturity is therefore just as important as benchmarking equipment performance.

How can technical assessment teams identify likely delays before procurement or retrofit starts?

The most effective method is to begin with a standards-to-change impact matrix rather than a generic compliance list. Every proposed modification should be mapped against affected systems, applicable standards, required evidence, interfaces, retest needs, and approving entities. This quickly shows whether the project is dealing with simple replacement, functional enhancement, safety-relevant architecture change, or market reauthorization. Without this mapping, timelines are usually optimistic by default.
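One way to keep such a matrix honest is to hold it as structured data and flag unmapped columns automatically. This is a minimal Python sketch. `ImpactRow`, `unmapped_columns`, and `incomplete_rows` are hypothetical names, and the columns simply mirror the ones listed in the paragraph above.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class ImpactRow:
    """One row of a standards-to-change impact matrix (columns follow the text)."""
    modification: str
    affected_systems: List[str] = field(default_factory=list)
    applicable_standards: List[str] = field(default_factory=list)
    required_evidence: List[str] = field(default_factory=list)
    interfaces: List[str] = field(default_factory=list)
    retest_needs: List[str] = field(default_factory=list)
    approving_entities: List[str] = field(default_factory=list)


def unmapped_columns(row):
    """Return the names of the list columns that are still empty for this row."""
    return [
        f.name
        for f in fields(row)
        if f.name != "modification" and not getattr(row, f.name)
    ]


def incomplete_rows(matrix):
    """Map each modification to its unmapped columns, skipping fully mapped rows."""
    result = {}
    for row in matrix:
        gaps = unmapped_columns(row)
        if gaps:
            result[row.modification] = gaps
    return result
```

A row with empty `approving_entities` or `retest_needs` is usually a sign that the timeline for that modification has not yet been tested against the approval pathway.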

Assessment teams should also separate three questions that are often blurred together: Is the design technically feasible? Is it standards-compliant? Is the evidence package approval-ready? A “yes” to the first does not guarantee the second, and a “yes” to the second does not guarantee the third. Many delayed upgrades are caught between these layers, especially when suppliers are strong at engineering delivery but weaker at regulatory packaging.
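The three questions can be treated as ordered gates, where the first unanswered one tells the team which layer the upgrade is stuck in. This is a small Python sketch. The `Gate` enum and `first_open_gate` function are hypothetical names introduced for illustration.

```python
from enum import Enum


class Gate(Enum):
    FEASIBLE = "design is technically feasible"
    COMPLIANT = "design is standards-compliant"
    APPROVAL_READY = "evidence package is approval-ready"


def first_open_gate(feasible, compliant, approval_ready):
    """Return the first gate that has not been passed, or None if all three are closed.

    The gates are checked in order because a later "yes" says nothing
    useful while an earlier gate is still open.
    """
    checks = (
        (Gate.FEASIBLE, feasible),
        (Gate.COMPLIANT, compliant),
        (Gate.APPROVAL_READY, approval_ready),
    )
    for gate, passed in checks:
        if not passed:
            return gate
    return None
```

A supplier that is strong at engineering delivery but weak at regulatory packaging would typically return `Gate.APPROVAL_READY` here.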

Another practical step is to run an early documentation audit before final vendor down-selection. This should review certificates, test reports, software lifecycle records, materials declarations, hazard analysis, RAMS evidence, maintenance assumptions, and prior approval references. The objective is not to duplicate full conformity assessment, but to expose evidence gaps while the design can still be adjusted at manageable cost.

Technical evaluators should also ask whether the target network imposes local or operator-specific requirements beyond international norms. In many rail markets, compliance is not determined by EN, IEC, ISO, or other reference standards alone. National technical rules, operator maintenance philosophies, depot constraints, climate loads, and operational scenarios can all reshape acceptance expectations. These factors should be built into evaluation criteria from the start.

What should be prioritized to shorten certification timelines?

If the aim is to reduce delay, prioritization matters more than volume of analysis. First, focus on interfaces that can block dynamic testing: signaling integration, braking performance, EMC, traction power compatibility, train communication networks, and safety-critical software. If these remain unresolved, downstream progress is limited regardless of completion in lower-risk work packages.

Second, prioritize evidence integrity. Certification bodies and assessors can work through technical complexity if the submission is coherent, traceable, and complete. They struggle when reports use inconsistent baselines, standards editions are unclear, software versions do not match test records, or material declarations cannot be tied to installed parts. Strong evidence management frequently saves more time than incremental engineering optimization.
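Some of these integrity problems can be caught mechanically before submission. The sketch below, in Python, checks two of them: test records whose software version differs from the installed baseline, and records that do not state a standards edition. The record keys (`id`, `sw_version`, `standard_edition`) are assumed field names, not a real schema.

```python
def evidence_mismatches(installed_sw_version, test_records):
    """Return (record id, issue) pairs for records that would weaken a submission.

    Each record is a dict with the assumed keys "id", "sw_version",
    and "standard_edition".
    """
    issues = []
    for rec in test_records:
        if rec.get("sw_version") != installed_sw_version:
            issues.append((rec["id"], "software version does not match installed baseline"))
        if not rec.get("standard_edition"):
            issues.append((rec["id"], "standards edition unclear"))
    return issues
```

The same pattern extends to material declarations by comparing declared part identifiers against the installed parts list.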

Third, prioritize supplier capability in regulated delivery, not only product capability. A manufacturer may offer strong hardware performance and competitive cost, but if it lacks experience with the target market’s approval structure, the project absorbs that risk. For international rail programs, technical assessment should include the supplier’s ability to support independent assessment, maintain controlled documentation, and respond quickly to nonconformity findings.

Finally, treat lifecycle maintainability as part of compliance planning. Standards-related delay does not end at entry into service. If spare parts traceability, maintenance intervals, software support obligations, obsolescence plans, and inspection methods are weak, acceptance authorities and operators may push back on long-term suitability. For fleet upgrades, the approval case is stronger when long-life support is demonstrably engineered, not assumed.

A practical decision framework for evaluating rail technical standards risk

For technical evaluators, a useful framework is to score each upgrade package across five dimensions: safety criticality, interoperability impact, software or cybersecurity exposure, infrastructure dependency, and evidence maturity. Projects that score high in three or more of these areas are likely to experience schedule pressure unless managed through staged validation and early assessor engagement.
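The scoring rule can be sketched in a few lines of Python. Two assumptions are not in the text: each dimension is scored from 1 to 5 as a risk score, so a high value under `evidence_maturity` means weak evidence, and a score of 4 or more counts as "high". The names `DIMENSIONS`, `HIGH`, and `schedule_pressure_likely` are hypothetical.

```python
DIMENSIONS = (
    "safety_criticality",
    "interoperability_impact",
    "software_cyber_exposure",
    "infrastructure_dependency",
    "evidence_maturity",  # assumption: scored as risk, so higher means weaker evidence
)

HIGH = 4  # assumption: 1-5 scale, with 4 or more treated as "high"


def schedule_pressure_likely(scores, high=HIGH):
    """Return True when three or more of the five dimensions score high."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    high_count = sum(1 for d in DIMENSIONS if scores[d] >= high)
    return high_count >= 3
```

Packages flagged by this rule are the ones where staged validation and early assessor engagement are most likely to pay off.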

The next step is to classify each subsystem as one of four types: like-for-like replacement, bounded functional modification, architecture-level change, or cross-network adaptation. This classification helps determine how deeply standards review must go. A like-for-like replacement or bounded functional modification may need only limited revalidation, while an architecture-level or cross-network change often requires a broader safety case update and more extensive demonstration activity.
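The four-way classification maps naturally onto an enumeration with a default review depth per type. In the Python sketch below, `ChangeType` and `review_depth` are hypothetical names. Grouping the first two types under limited revalidation is an assumption, a starting position rather than a rule, since the actual depth depends on the change impact analysis.

```python
from enum import Enum


class ChangeType(Enum):
    LIKE_FOR_LIKE = "like-for-like replacement"
    BOUNDED_MODIFICATION = "bounded functional modification"
    ARCHITECTURE_CHANGE = "architecture-level change"
    CROSS_NETWORK = "cross-network adaptation"


# Assumption: these two types default to the broader review path.
BROAD_REVIEW = {ChangeType.ARCHITECTURE_CHANGE, ChangeType.CROSS_NETWORK}


def review_depth(change_type):
    """Return a default review depth for a subsystem, used as a planning start point."""
    if change_type in BROAD_REVIEW:
        return "broader safety case update and more extensive demonstration"
    return "limited revalidation"
```

For a whole upgrade package, the deepest review path among its subsystems is a reasonable first estimate of the overall effort.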

It is also valuable to distinguish recoverable gaps from structural gaps. Recoverable gaps include missing test records, outdated declarations, or incomplete traceability that can be fixed with disciplined document recovery. Structural gaps involve vehicle design limitations, incompatible interfaces, unsegregated networks, or insufficient physical accommodation for compliant equipment. Structural gaps are the ones that most often turn a planned upgrade into a delayed redesign.

Organizations that manage this well typically combine technical benchmarking, standards intelligence, and supply-chain scrutiny. They do not ask only whether a subsystem complies in theory. They ask whether it can be integrated, validated, maintained, and accepted in the target operating environment with credible evidence and realistic lead times. That is the level of discipline increasingly required in global rail modernization.

Conclusion

The rail technical standards that most often delay fleet upgrades are not random obstacles. They are predictable pressure points where old fleet assumptions meet new safety, interoperability, software, cybersecurity, and lifecycle requirements. For technical assessment teams, the key insight is that delay usually comes less from the existence of standards than from late recognition of how a proposed change affects multiple compliance domains at once.

The most effective response is early, structured evaluation: map the change against applicable standards, verify evidence maturity before commitment, test interfaces before full rollout, and judge suppliers by approval readiness as well as engineering quality. When technical evaluators work this way, fleet modernization becomes far more manageable. Certification timelines improve, retrofit risk falls, and long-term fleet performance is protected across complex international rail programs.
